This week’s post was written by Dr Kasra Sabermanesh, Rothamsted Research.

I am a post-doctoral research scientist within Rothamsted Research’s BBSRC-funded 20:20 Wheat® program, which aims to provide the knowledge base and tools to increase the UK wheat yield potential from 8.4 to 20 tons of wheat per hectare within the next 20 years. Field phenotyping is one component of this program and facilitates the non-destructive monitoring of field-grown crops. Traditional methods of field phenotyping require huge human effort, which consequently limits the accuracy, frequency, and number of different measurements that can be taken at one time. Fortunately, Rothamsted has an exciting solution to this problem.

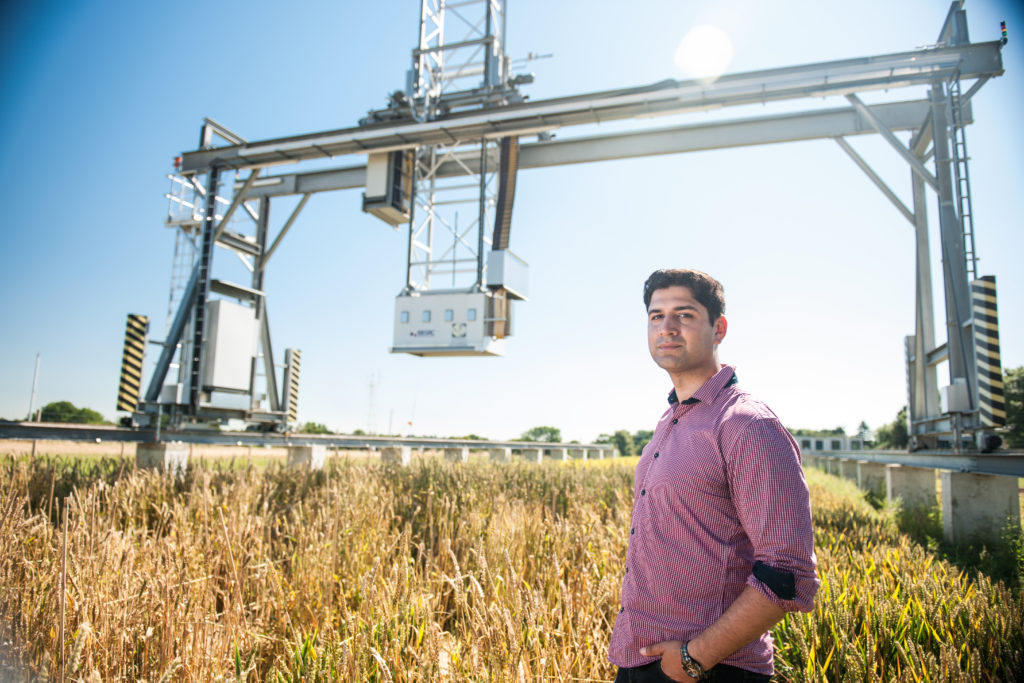

The Field Scanalyzer

Our field phenotyping platform, the Field Scanalyzer (constructed by LemnaTec GmbH and being further developed by ourselves), supports a motorized measuring platform with multiple sensors that can be accurately positioned anywhere within a dedicated field. The sensor array comprises a high-definition RGB camera, two hyperspectral cameras, a thermal infrared camera, a system for imaging chlorophyll fluorescence, and twin scanning lasers for 3D information capture. Together, these sensors generate a wealth of data about crop growth, architecture, performance, and health. The Field Scanalyzer operates autonomously and at high throughput, meaning it can take many non-destructive measurements without human supervision, throughout the crop’s life cycle, with high accuracy and reproducibility. (You can read more about the Field Scanalyzer in our recent paper: http://www.publish.csiro.au/FP/pdf/FP16163).

We are currently using the Field Scanalyzer to identify new crop characteristics that relate to performance, and to identify new genetic diversity for existing traits. Outputs from either of these research components can be delivered to breeders. We are screening approximately 400 wheat varieties, and are also imaging some oilseed rape and oat plants.

The big data problem

The Field Scanalyzer at Rothamsted is a world first, so we initially had to develop all the necessary image acquisition protocols and image processing tools in order to exploit its full capabilities. A number of image processing tools are available; however, they are not suitable for field-grown crops, as they were not developed for complex canopies consisting of hundreds of plants in highly dynamic ambient conditions. The platform can generate up to 100 TB of data in a year of continuous operation (using all of the sensors). That’s why I work with two other post-docs to develop robust computer vision tools that automate the extraction of quantitative data from our images. We are also validating the accuracy of the values extracted from our images by comparing them with measurements obtained manually.
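To make the validation step more concrete, here is a minimal sketch of how image-derived values might be compared with manual measurements. It is not our actual pipeline: the function names are hypothetical, the choice of metrics (root-mean-square error, mean bias, and Pearson correlation) is an assumption, and the height values are made-up placeholders rather than real data.

```python
from math import sqrt
from statistics import mean


def rmse(predicted, observed):
    """Root-mean-square error between two equal-length sequences."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sqrt(mean([(p - o) ** 2 for p, o in zip(predicted, observed)]))


def bias(predicted, observed):
    """Mean signed difference (positive means the images over-estimate)."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return mean([p - o for p, o in zip(predicted, observed)])


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (sx * sy)


if __name__ == "__main__":
    # Placeholder plot heights in cm (illustrative only, not measured data).
    image_heights_cm = [62.0, 75.5, 88.0, 94.5]
    manual_heights_cm = [60.0, 77.0, 86.5, 96.0]
    print("RMSE (cm):", rmse(image_heights_cm, manual_heights_cm))
    print("Bias (cm):", bias(image_heights_cm, manual_heights_cm))
    print("Pearson r:", pearson_r(image_heights_cm, manual_heights_cm))
```

A low RMSE with a bias near zero and a correlation close to 1 would suggest that the automated values track the manual ones well; which thresholds count as acceptable depends on the trait.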

Approximately 1.5 years have passed since we first began operating the Field Scanalyzer, and we have now optimized all of our image acquisition protocols and collected a full seasonal dataset. With good-quality images stored in our database, we have developed some robust tools to automatically extract information about some key growth stages (ear emergence and flowering), and to quantify height and the number of some plant organs. We are still just scratching the surface though, and have a list of traits for which we want to develop computer vision tools in order to analyze them automatically.

Take to the skies: Drones for data collection

Some of my colleagues work with drones (UAVs) to capture information about crop height, plant density (via the Normalized Difference Vegetation Index), and canopy temperature from large-scale field trials containing 5000 plots. They also fly the UAVs over our Field Scanalyzer site, so we can compare data collected from the higher-flying UAV with those collected from the Field Scanalyzer at close proximity. The way we see it, UAVs can image large fields in a very short time (15 min), so if we notice something interesting using the UAV at the large plot scale, we can put the material under the Field Scanalyzer for high-resolution phenotyping. Conversely, once the Field Scanalyzer gives us a better understanding of which traits we need to focus on, when we should be looking at them, and exactly which sensors are required to quantify them, we can deploy drones with the necessary sensors (once the sensors are portable enough) to collect this information at field scale and at the appropriate time.

Taking to the skies: Drones are used for large-scale phenotyping at Rothamsted. Credit: Rothamsted Research.
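For readers unfamiliar with the Normalized Difference Vegetation Index mentioned above, it is calculated from the reflectance in the near-infrared (NIR) and red bands as NDVI = (NIR - Red) / (NIR + Red). The short sketch below shows the calculation for a single pixel and its average over a plot. The function names and the reflectance values are illustrative assumptions, not part of our UAV processing software.

```python
import math


def ndvi(nir, red):
    """NDVI for one pixel from near-infrared and red reflectance."""
    denom = nir + red
    if denom == 0:
        # No signal in either band: the index is undefined.
        return math.nan
    return (nir - red) / denom


def plot_mean_ndvi(nir_pixels, red_pixels):
    """Mean NDVI over the pixels of one plot, skipping undefined pixels."""
    if len(nir_pixels) != len(red_pixels):
        raise ValueError("band arrays must have the same length")
    values = [ndvi(n, r) for n, r in zip(nir_pixels, red_pixels)]
    valid = [v for v in values if not math.isnan(v)]
    if not valid:
        return math.nan
    return sum(valid) / len(valid)


if __name__ == "__main__":
    # Placeholder reflectance values (illustrative only, not measured data).
    nir_band = [0.52, 0.48, 0.55, 0.0]
    red_band = [0.08, 0.10, 0.07, 0.0]
    print("Plot mean NDVI:", plot_mean_ndvi(nir_band, red_band))
```

Values range from -1 to 1, with dense green canopies typically giving higher values than bare soil.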

The future of phenotyping

I envision that the future of phenotyping technology will focus on reducing the cost and size of cameras and sensors, ultimately increasing their portability and accessibility. This will result in more sophisticated cameras being attached to UAVs (many of the sensors we currently use far exceed a UAV’s payload capacity). In parallel, research efforts are focusing on developing image processing systems that efficiently extract quantitative information about crops from acquired images. Together, these advances could lead to phenotyping systems, such as low-flying UAVs that generate easily interpreted data outputs, which may be more widely adopted by breeders and farmers to gain deeper insight into their crops’ health and performance.